It’s been a while since I wrote a short article on my blog, but I felt inspired by a combination of a large coffee, a sunny Saturday morning, and this study: http://www.nutritionjrnl.com/article/S0899-9007(15)00077-5/abstract

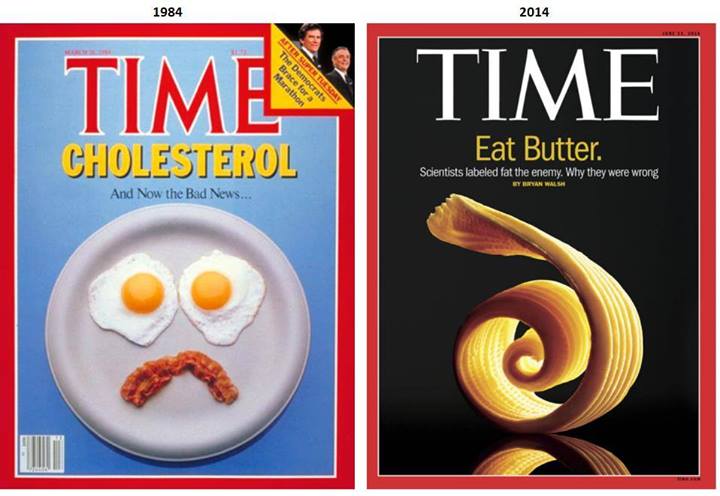

This paper has been released with a large amount of fanfare in the low-carb high-fat (LCHF) community, whom I largely consider myself to be part of, because it uses almost 60 years of population-based observational dietary data to show that:

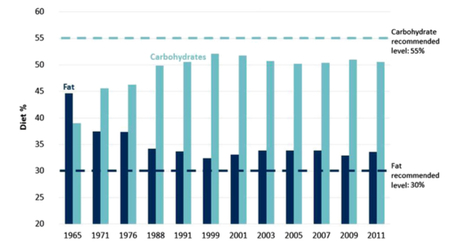

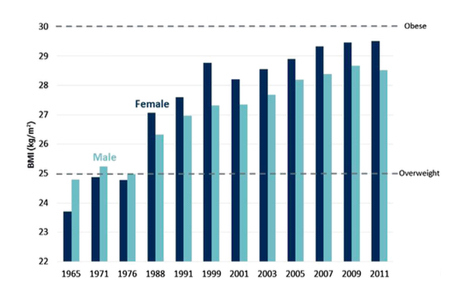

The authors show a strong and damning correlation between the US dietary guidelines and incidence of obesity. In short, people ate how they were told to eat, and it made them fat and sick. The paper is from an open access journal, so I have taken a couple of graphs to show the findings:

This paper has been released with a large amount of fanfare in the low-carb high-fat (LCHF) community, whom I largely consider myself to be part of, because it uses almost 60 years of population-based observational dietary data to show that:

- The US population has been following the recommended dietary guidelines to reduce (saturated) fat intake, and increase carbohydrate intake.

- During that same time period, incidence of diabetes and obesity has dramatically increased.

The authors show a strong and damning correlation between the US dietary guidelines and incidence of obesity. In short, people ate how they were told to eat, and it made them fat and sick. The paper is from an open access journal, so I have taken a couple of graphs to show the findings:

Between the 60s and 90s, fat intake decreased and carbohydrate intake increased, with an impressive increase in BMI over the same time.

When I read the paper, I was immediately faced with a dilemma. I agree with the premise and findings of the paper. Our focus on carbohydrates at the expense of fat, which allowed the consumption of processed carbohydrates and sugars to rise and dominate, has absolutely played a huge part in the Western obesity epidemic that we see today.*

However, when you get into the details of the paper, things get interesting.

Almost all of the data in this paper comes from the US National Health and Nutrition Examination Survey (NHANES), which has been running for around 60 years. The problem with NHANES is that much of its data comes from thousands of phone conversations, in which interviewers ring people up and ask what they ate in the last 24 hours. They then estimate how representative those 24 hours were of the person’s normal diet, and that data is used to determine whether the diet had any effect on their health status 10 or 20 years down the line. But we have good data to show that what people say they eat and what they actually eat do not match up, and that this discrepancy is larger in people who are obese. For instance, this study showed that self-reported sugar intake does not predict obesity, whereas actual sugar intake measured from urine samples predicts it much better.**

So if people are being told to eat a certain way, isn’t it likely that they’ll under-report the foods that they’re not “supposed” to be eating, as humans are prone to do? Wouldn’t that make it likely that the NHANES data will reflect dietary guidelines? Nobody wants to be found out as the person eating steak and eggs when they’ve been told not to. This may be why, when the NHANES data was analysed recently, the authors concluded that over 60% of the data were “not physiologically plausible”. That means that the majority of the people in the NHANES study reported eating less food than they could physically have been living on. On average, over 700 calories per day were missing. What was in those calories, we’ll never know!
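To make that reasoning concrete, here is a toy sketch in Python. Every number in it is made up for illustration (the calorie figures, the decades, and the under-reporting fractions are my assumptions, not NHANES values), and the function name is hypothetical. It only shows the mechanism: if the true diet never changes but people leave out a growing share of their fat calories, the reported diet drifts towards the guidelines all by itself.

```python
# Toy illustration only: all numbers below are invented, not taken from NHANES.

def reported_fat_share(true_fat_kcal, true_other_kcal, fat_underreport):
    """Fraction of *reported* calories that come from fat when a given
    fraction of the true fat calories is left out of the food recall."""
    reported_fat = true_fat_kcal * (1 - fat_underreport)
    return reported_fat / (reported_fat + true_other_kcal)

# Assumption: the true diet is identical in every decade.
TRUE_FAT_KCAL = 1000
TRUE_OTHER_KCAL = 1500

# Assumption: pressure to follow the guidelines grows, so people omit
# a larger share of their fat calories in each successive decade.
scenarios = [("1960s", 0.0), ("1970s", 0.1), ("1980s", 0.2), ("1990s", 0.3)]

for decade, underreport in scenarios:
    share = reported_fat_share(TRUE_FAT_KCAL, TRUE_OTHER_KCAL, underreport)
    print(f"{decade}: reported fat share = {share:.1%}")
```

In this made-up scenario the reported fat share falls from 40.0% to 31.8% even though nobody changed what they ate. That does not show this is what happened in NHANES, only that self-reported data could produce such a trend on its own.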

But what really bothers me is the way that people approach studies based on NHANES data depending on the result. For instance, last year, when a paper found an association between high protein (>20% of calories from protein) diets and risk of death from cancer, critics felt that the biases of the lead author (a proponent of a vegan diet) had influenced the findings. The paper used NHANES data, and was torn to shreds by those who disagreed with it, largely because of the poor quality of the NHANES dataset. Many of these same people are now lauding the paper at hand, despite it relying on the very same data.***

This is absolutely an important paper. But if you want to trust the NHANES data (which to some extent we have to because it’s some of the best data we have), or any data for that matter, you can’t then pick which answers you like and which you don’t.

Science just doesn’t work like that!

If we, as a community, want to start a new era of high-quality nutrition research to counteract that which left us with the confusion and health problems we have today, we have to hold ourselves to a higher standard of work than that which was done previously. This should include acknowledging the limitations of our data, as well as pushing for higher quality studies using higher quality data.

Notes

There are a few additional minor points that should be kept in mind, though they don’t necessarily undermine the quality of the paper:

- At the end, the journalist Nina Teicholz is acknowledged for her editorial contribution to the paper. Maybe she provided some of the background behind the US dietary guidelines, a topic that she is well versed in. However, as the conclusions from Nina’s book (The Big Fat Surprise) overlap hugely with the conclusions from the paper, one has to ask whether some bias has crept in. Now don’t get me wrong, I agree with Nina on many things. I think you should read her book and learn about her excellent work. But why was her input needed for a scientific paper?

- The paper was written by an economic consulting group, The Brattle Group. The first author (who usually does most of the writing) is an MBA, not a scientist, doctor, or statistician. Who paid The Brattle Group to write this study? That information isn’t mentioned anywhere, and I doubt these guys do stuff for free.

- The paper was also accepted by the journal a week after it was submitted. In the world of peer-reviewed science, this is unheard of. The editor has to find two or three experts in the field, get them to read the paper, and then get the authors to re-write the paper, or answer any queries that the reviewers had. This can take months. I’m not a big fan of conspiracy theories, but I just don’t see how this paper can have gone through rigorous peer review.

*For those who want a much more in-depth account of how the US dietary guidelines were bungled, read Death by Food Pyramid by Denise Minger and The Big Fat Surprise by Nina Teicholz.

**The urine test used to determine sugar intake in that study was developed as part of my undergraduate biochemistry research project: http://www.ncbi.nlm.nih.gov/pubmed/18181249

***One caveat is that the current paper uses all 10 NHANES datasets whereas the protein paper only used one. A longer-term trend almost certainly increases the likelihood that the findings are true.
